Commits on Source (1528)

Showing changed files

- .gitignore: 3 additions, 0 deletions

- .gitlab-ci.yml: 137 additions, 0 deletions

- .spelling: 850 additions, 0 deletions

- README.md: 8 additions, 0 deletions

- docs.it4i/anselm/compute-nodes.md: 132 additions, 0 deletions

- docs.it4i/anselm/hardware-overview.md: 68 additions, 0 deletions

- docs.it4i/anselm/introduction.md: 20 additions, 0 deletions

- docs.it4i/anselm/network.md: 38 additions, 0 deletions

- docs.it4i/anselm/storage.md: 427 additions, 0 deletions

- docs.it4i/apiv1.md: 3 additions, 0 deletions

- docs.it4i/archive/archive-intro.md: 50 additions, 0 deletions

- docs.it4i/barbora/compute-nodes.md: 146 additions, 0 deletions

- docs.it4i/barbora/hardware-overview.md: 67 additions, 0 deletions

- docs.it4i/barbora/img/BullSequanaX.png: 0 additions, 0 deletions

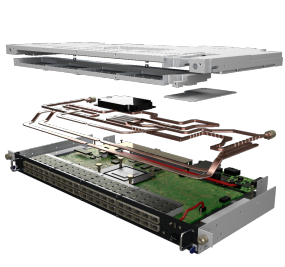

- docs.it4i/barbora/img/BullSequanaX1120.png: 0 additions, 0 deletions

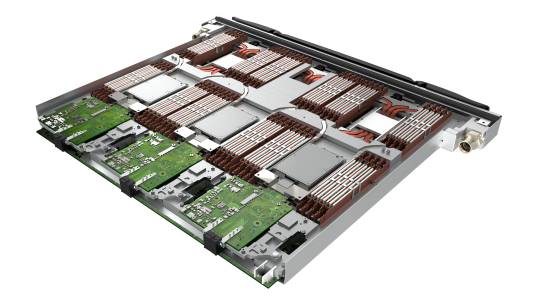

- docs.it4i/barbora/img/BullSequanaX410E5GPUNVLink.jpg: 0 additions, 0 deletions

- docs.it4i/barbora/img/BullSequanaX808.jpg: 0 additions, 0 deletions

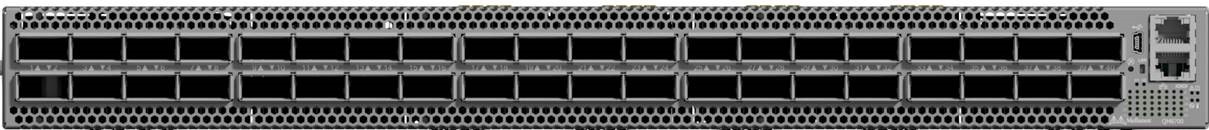

- docs.it4i/barbora/img/QM8700.jpg: 0 additions, 0 deletions

- docs.it4i/barbora/img/XH2000.png: 0 additions, 0 deletions

- docs.it4i/barbora/img/bullsequanaX450-E5.png: 0 additions, 0 deletions

.gitignore

0 → 100644

.gitlab-ci.yml

0 → 100644

.spelling

0 → 100644

README.md

0 → 100644

docs.it4i/anselm/compute-nodes.md

0 → 100644

docs.it4i/anselm/hardware-overview.md

0 → 100644

docs.it4i/anselm/introduction.md

0 → 100644

docs.it4i/anselm/network.md

0 → 100644

docs.it4i/anselm/storage.md

0 → 100644

docs.it4i/apiv1.md

0 → 100644

docs.it4i/archive/archive-intro.md

0 → 100644

docs.it4i/barbora/compute-nodes.md

0 → 100644

docs.it4i/barbora/hardware-overview.md

0 → 100644

docs.it4i/barbora/img/BullSequanaX.png

0 → 100644

572 KiB

docs.it4i/barbora/img/BullSequanaX1120.png

0 → 100644

184 KiB

docs.it4i/barbora/img/BullSequanaX410E5GPUNVLink.jpg

0 → 100644

9.32 KiB

docs.it4i/barbora/img/BullSequanaX808.jpg

0 → 100644

4.31 KiB

docs.it4i/barbora/img/QM8700.jpg

0 → 100644

53.5 KiB

docs.it4i/barbora/img/XH2000.png

0 → 100644

74.7 KiB

docs.it4i/barbora/img/bullsequanaX450-E5.png

0 → 100644

33.4 KiB